This article is a repost of an article authored by Josep Alvarez on LinkedIn.

I recommend diving into Gary Marcus's works for a fresh perspective on the current Gen AI hype and a critical view of Big Tech. Marcus is a renowned American psychologist and cognitive scientist whose research at the intersection of cognitive psychology, neuroscience, and artificial intelligence is insightful and highly relevant in today's AI landscape. His critical views on Big Tech's practices and the ineffectiveness of current policies are also a valuable addition to the discourse.

In his lecture at the Starmus Festival in Bratislava some weeks ago, Marcus passionately explored the current challenges and trends in Artificial Intelligence.

Let me share some relevant takeaways from his presentation:

The most popular current approach to AI, Generative AI, is just one of several possible approaches. It has fundamental problems: it is energy inefficient, prone to hallucinations, carries an ingrained risk of bias and plagiarism linked to its training data, and even has a personality problem, in that it does not present a picture of good mental health (Speed, 2023). Marcus devised the moniker “rough draft AI” to describe this approach.

Considering these challenges, it is crucial to recognise that an unstable AI, unanchored in reality, brings with it a multitude of immediate risks that we must be mindful of: disinformation, market manipulation, accidental misinformation, defamation, nonconsensual deepfakes, accelerating crime, cybersecurity and bioweapons, bias and discrimination, privacy and data leaks, intellectual property taken without consent, over-reliance on unreliable systems, and environmental costs.

While new regulations are expected, Big Tech companies are currently setting the course on behalf of humanity. This direction of travel has triggered a debate about the use of open foundation models and their potential to heighten the risk of deliberate misuse, with adverse effects on society (LeCun, 2024) (Krier, 2023) (Hinton, 2023) (Esvelt, 2023).

Marcus warned us that Big Tech's strong influence on AI policy decisions is bordering on regulatory capture (Schaake, 2023) (Bilge, 2024).

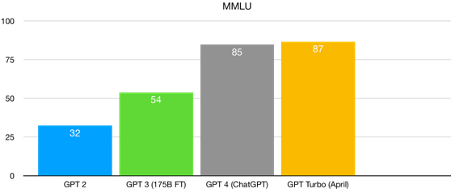

Beyond the pervasive presence of Gen AI in the media, we may have reached a point of diminishing returns in the rate of improvement of Large Language Models (LLMs) under the current paradigm. Marcus shared a plot of the MMLU (Massive Multitask Language Understanding) benchmark showing that performance has plateaued.

Even more fascinating was his analogy between the two main AI approaches and Kahneman's two systems of human cognition (Kahneman, 2011), which share similar features.

The beauty of the human mind is its ability to combine both systems of cognition. Meanwhile, the two AI approaches (Generative and Symbolic) have been developed independently, each trying to cover all real-life use cases on its own and with limited results. Even worse, the intellectual animosity (i.e. fights over grants, resources, and prestige) between the two AI research communities may have set the field back, possibly by decades. Ideally, if we could build an AI that combines both approaches, we would create a model that is learnable, data-efficient, interpretable, reliable, verifiable, and grounded in facts: an approach capable of reasoning about human values.

Marcus suggested a solution to move forward as a civilisation: the creation of a CERN for AI (Marcus, 2017). High-energy physics offers the model: its international collaboration has pursued ambitious projects such as the Large Hadron Collider, used to discover the Higgs boson (CERN, 2012), and has shared its results with the world rather than restricting them to a single group. In the same way, an international AI mission focused on teaching machines to read could genuinely change the world for the better.

In conclusion, Marcus sees the issues with AI as parallel to those with climate change. He points out that we have a limited window to solve them and cannot count on governments unless society is mobilised. More importantly, the choices we make now will have lasting effects.

All in all, Marcus's lecture is food for thought that challenges the zeitgeist of the 2020s, which tends to jump readily into new technologies without fully assessing their potential pitfalls or taking a long-term view.

What are your thoughts on Gary Marcus' vision?

References

Bilge, T., 2024. X (formerly Twitter). [Online] Available at: https://x.com/TolgaBilge_/status/1784022313198932376 [Accessed 19 06 2024].

CERN, 2012. How did we discover the Higgs boson? [Online] Available at: https://home.cern/science/physics/higgs-boson/how#:~:text=The%20Higgs%20boson%20was%20discovered,second%2Dheaviest%20particle%20known%20today. [Accessed 19 06 2024].

Esvelt, K., 2023. X (formerly Twitter). [Online] Available at: https://x.com/kesvelt/status/1720633253927768486 [Accessed 19 06 2024].

Hinton, G., 2023. X (formerly Twitter). [Online] Available at: https://x.com/geoffreyhinton/status/1719447980753719543 [Accessed 19 06 2024].

Kahneman, D., 2011. Thinking, Fast and Slow. 1st ed. s.l.:Farrar, Straus and Giroux.

Krier, S., 2023. X (formerly Twitter). [Online] Available at: https://x.com/sebkrier/status/1720027057667592235 [Accessed 19 06 2024].

LeCun, Y., 2024. X (formerly Twitter). [Online] Available at: https://x.com/ylecun/status/1762628257801818263 [Accessed 19 06 2024].

Marcus, G., 2017. Artificial Intelligence Is Stuck: Here’s How to Move It Forward. New York Times. [Online] Available at: https://www.nytimes.com/2017/07/29/opinion/sunday/artificial-intelligence-is-stuck-heres-how-to-move-it-forward.html [Accessed 19 06 2024].

Marcus, G., 2024. Evidence that LLMs are reaching a point of diminishing returns — and what that might mean. [Online] Available at:

Marcus, G., 2024. Taming Silicon Valley: How We Can Ensure That AI Works for Us. 1st ed. s.l.:The MIT Press.

Schaake, M., 2023. X (formerly Twitter). [Online] Available at: https://x.com/MarietjeSchaake/status/1697140170552697248 [Accessed 19 06 2024].

Speed, A., 2023. Assessing the nature of large language models: A caution against anthropocentrism. arXiv preprint arXiv:2309.07683.